A couple of months ago I acquired a stack of Raspberry Pis because they were so hard to get, might as well get it over with. Of course, once I got them they went untouched for weeks. I eventually started selling off part of my stash to other coworkers to help spread the fun. After building the IKEA cluster using 10 mini-itx Atom boards in a HELMER cabinet, the next logical progression would be to build a Pi cluster. I began to wonder what one could even do with such a tiny rig (it’s fantastic for learning Chef or Puppet system automation), and half jokingly wondered if it could run Hadoop.

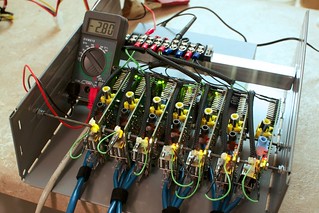

A Pi cluster is not new, people have done this before. Unfortunately it usually involves a gigantic stack of power strips and USB power adapters. I wanted to get rid of all of that and because I knew the Pi can be powered via USB or header, so I wired up a small power bus. Now, multiple Pis are powered by a standard Molex drive connector (which gives you 5V on the red/black wires) using a leftover ATX PSU. By my measurement, each Pi draws 400 mA, but I don’t know if/what the real limits are of the 5V output of the PSU though. It’s vague and hand-wavy, but I do know I haven’t yet burned my apartment down.

I had leftover IKEA HELMER drawers from the last project, which are basically 9.5″ wide, 4″ tall, and trimmed to 11″ deep. This perfectly fits 8-9 Raspberry Pis and a Netgear switch (PSU is elsewhere), but such a small switch only provides 8 switch ports with one of those ate for uplink, putting me at 7 RPis for my cluster. There doesn’t seem to be a compact 10/12/16 port switch that fits into the drawer so sadly some potential is lost.

I had leftover IKEA HELMER drawers from the last project, which are basically 9.5″ wide, 4″ tall, and trimmed to 11″ deep. This perfectly fits 8-9 Raspberry Pis and a Netgear switch (PSU is elsewhere), but such a small switch only provides 8 switch ports with one of those ate for uplink, putting me at 7 RPis for my cluster. There doesn’t seem to be a compact 10/12/16 port switch that fits into the drawer so sadly some potential is lost.

The project wasn’t without setbacks, finding a simple way to mount them all took work. At first I tried stringing them up on pieces of coat hanger wire, but this was too flimsy to be of any use. The ethernet cabling wanted to pull everything around despite being zip-tied in place. I tried the threaded road approach this time. The holes in the board are really intended for fabrication and not mounting swears the RPi foundation, so nothing standard size fits through them. Finally in the back of the hardware store I found one 3′ piece of 4-40 (I think!) threaded rod that fit. It was a complete pain in the ass to thread 7 boards between a sandwich of nuts at the same time even with a drill to do the twisting, but the result was worth it after mounted in the drawer. The Pis are mounted solid and are eaaaaasy to work around now.

Yes, if you blow one in the middle you’ll have to disassemble it to get it out, this happened to me before I started using the rod. The coat hanger solution was so shitty that somehow I shorted something and grey smoke rolled off a RPi. I took it out and later tested it, the little sucker STILL BOOTS. I have no idea what I fried on it, but they’re more resilient than I expected.

For power cabling, I used some breadboard jumper wires I had laying around. Cut them in half, the header plugs onto the RPi, other ends get soldered into a terminal. Instead of switches to power off individual RPis, I happened to have 3″ male/female jumpers which were perfect to loop up front to plug/unplug power conveniently. Alas I can’t get to the HDMI ports, I figured access to GPIO pins were more important.

Fry’s had a great sale the other day on 32 GB SDHC cards ($10!) so I maxed out most of the boards with storage. Ethernet is just a 1′ cat 5 (cat 6 is too stiff really) patch that’s looped and velcro’d in place.

All in all, the boards together draw 2.8 A at 5 VDC when running a UDP iperf test. The AC outlet meter continuously shows it drawing 30 W however, so I think this indicates overhead/inefficiency in the PSU. Best of all, it’s PERFECTLY SILENT. No fans, the heat it does give off is barely noticeable. It just sits there like this bizzaro computation toy, blinking away.

On to Hadoop!

Hadoop + HDFS + MapReduce

Why would you do such a silly thing? To see if it can be done!

I still run Raspbian on the boards, which is basically Debian 6. I haven’t had any particular problems building or getting normal packages to work on the RPi. It just works and cranks away like an old Celeron. There was doubt amongst others if Java would even work on them, be it memory consumption or ARM compatibility. The Raspbian distro includes OpenJDK which seems to work just fine.

Finally one night after I got all of the basic system Chef recipes ironed out I decided to just try installing Hadoop to see what would happen. In the course of my day job I don’t administer Hadoop infrastructure and only have a casual idea of how it all fits together, so it was all completely brand new to me. Being the impatient type I started rage googling and quickly found excellent tutorials on how to install Hadoop on single node and multi nodes by Michael Noll. The official Hadoop quick start guide seems sort of lacking in a few places, but his are complete and informative in the process. There were a few bumps, but nothing installing more packages or googling couldn’t solve quickly.

I think I got the cluster up and working with a couple of hours, start to finish, complete with HDFS. Get a single node working first, that’s 85% of the work done. The rest is stamping out the single node over and over X nodes, then updating the config to point at one NameNode. Even the included start-dfs script will take care of starting/stopping DataNode services on your slaves for you, so you only have to fiddle with the master.

Suffice to say it’s pretty pokey, but the point is that it works!

- Raw SDHC flash card speed: 110-115 MB/sec sequential read, 11-12 MB/sec sequential write

- HDFS write (730MB ISO file): 6 MB/sec read, 1.2 MB/sec write (5 minutes to copy an ISO to DFS, 2 minutes to copy back)

Getting MapReduce took some tuning because the default Java heap size is 1 G (ha!). Interestingly I was still able to run an example MR wordcount job from the tutorial on a single RPi with the default heap despite it swapping heavily and dropping some workers. After about 20 minutes later it finished and wrote its results. On the cluster however the example eventually failed after a long time, due to general memory starvation. Tuning the Java heap way down to 256 MB setting made the situation bearable, nodes could finally successfully finish small example MR jobs in a few minutes.

# hadoop.env.sh tweaks: JAVA_HOME=/usr/lib/jvm/default-java export HADOOP_OPTS="-Xmx256m" HADOOP_SSH_OPTS="-o StrictHostKeyChecking=no"

It’s amusing to note all this breaks the Hadoop best practices, going right down the list and snubbing every requirement. Tiny amounts of ram, slow I/O, slow network, slow everything. I guess the only thing going in performance’s favor is the fact that raw reads from SDHC are 10x faster than writes. Having said that, it’s all stock everything so performance can only get better if one really wanted to crawl all over it. In the end it’s still a good exercise in learning how something new works!

It remains to be seen if I keep the RPis in this configuration, or I swap them out later with Cubieboards. Either way, the form factor means it can be inserted into the IKEA cluster cabinet for some “yo dawg I heard you like clusters…” action.

EDIT: MapReduce example with ‘wordcount’:

MapReduce is pretty painful. Here I’m running a MR wordcount job using 2,098,367 bytes (!) of plain text as input … 10 minutes and 32 seconds later the job completes.

hduser@rpi01:/opt/hadoop$ time bin/hadoop jar hadoop*examples*.jar wordcount /user/hduser/txt /user/hduser/txt-out1115 13/03/13 07:20:53 INFO input.FileInputFormat: Total input paths to process : 2 13/03/13 07:20:54 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable 13/03/13 07:20:54 WARN snappy.LoadSnappy: Snappy native library not loaded 13/03/13 07:21:02 INFO mapred.JobClient: Running job: job_201303130634_0003 13/03/13 07:21:03 INFO mapred.JobClient: map 0% reduce 0% 13/03/13 07:22:36 INFO mapred.JobClient: map 1% reduce 0% 13/03/13 07:22:40 INFO mapred.JobClient: map 2% reduce 0% ... 13/03/13 07:26:38 INFO mapred.JobClient: map 98% reduce 16% 13/03/13 07:26:42 INFO mapred.JobClient: map 99% reduce 16% 13/03/13 07:26:47 INFO mapred.JobClient: map 100% reduce 16% 13/03/13 07:28:50 INFO mapred.JobClient: map 100% reduce 33% ... 13/03/13 07:30:02 INFO mapred.JobClient: map 100% reduce 100% 13/03/13 07:30:59 INFO mapred.JobClient: Job complete: job_201303130634_0003 13/03/13 07:30:59 INFO mapred.JobClient: Counters: 30 13/03/13 07:30:59 INFO mapred.JobClient: Job Counters 13/03/13 07:30:59 INFO mapred.JobClient: Launched reduce tasks=1 13/03/13 07:30:59 INFO mapred.JobClient: SLOTS_MILLIS_MAPS=741642 13/03/13 07:30:59 INFO mapred.JobClient: Total time spent by all reduces waiting after reserving slots (ms)=0 13/03/13 07:30:59 INFO mapred.JobClient: Total time spent by all maps waiting after reserving slots (ms)=0 13/03/13 07:30:59 INFO mapred.JobClient: Rack-local map tasks=1 13/03/13 07:30:59 INFO mapred.JobClient: Launched map tasks=3 13/03/13 07:30:59 INFO mapred.JobClient: Data-local map tasks=2 13/03/13 07:30:59 INFO mapred.JobClient: SLOTS_MILLIS_REDUCES=276932 13/03/13 07:30:59 INFO mapred.JobClient: File Output Format Counters 13/03/13 07:30:59 INFO mapred.JobClient: Bytes Written=470902 13/03/13 07:30:59 INFO mapred.JobClient: FileSystemCounters 13/03/13 07:30:59 INFO mapred.JobClient: FILE_BYTES_READ=733821 13/03/13 07:30:59 INFO mapred.JobClient: HDFS_BYTES_READ=2098588 13/03/13 07:30:59 INFO mapred.JobClient: FILE_BYTES_WRITTEN=1539326 13/03/13 07:30:59 INFO mapred.JobClient: HDFS_BYTES_WRITTEN=470902 13/03/13 07:30:59 INFO mapred.JobClient: File Input Format Counters 13/03/13 07:30:59 INFO mapred.JobClient: Bytes Read=2098367 13/03/13 07:30:59 INFO mapred.JobClient: Map-Reduce Framework 13/03/13 07:30:59 INFO mapred.JobClient: Map output materialized bytes=733827 13/03/13 07:30:59 INFO mapred.JobClient: Map input records=44877 13/03/13 07:30:59 INFO mapred.JobClient: Reduce shuffle bytes=733827 13/03/13 07:30:59 INFO mapred.JobClient: Spilled Records=102008 13/03/13 07:30:59 INFO mapred.JobClient: Map output bytes=3474212 13/03/13 07:30:59 INFO mapred.JobClient: CPU time spent (ms)=518320 13/03/13 07:30:59 INFO mapred.JobClient: Total committed heap usage (bytes)=413401088 13/03/13 07:30:59 INFO mapred.JobClient: Combine input records=361196 13/03/13 07:30:59 INFO mapred.JobClient: SPLIT_RAW_BYTES=221 13/03/13 07:30:59 INFO mapred.JobClient: Reduce input records=51004 13/03/13 07:30:59 INFO mapred.JobClient: Reduce input groups=44447 13/03/13 07:30:59 INFO mapred.JobClient: Combine output records=51004 13/03/13 07:30:59 INFO mapred.JobClient: Physical memory (bytes) snapshot=397709312 13/03/13 07:30:59 INFO mapred.JobClient: Reduce output records=44447 13/03/13 07:30:59 INFO mapred.JobClient: Virtual memory (bytes) snapshot=1056927744 13/03/13 07:30:59 INFO mapred.JobClient: Map output records=361196 real 10m32.817s user 0m32.830s sys 0m1.950s

So yeah, it works, just veeeeery slowly.

I will have RS2 farms as yours environment, and try to look how does it work?

I agree RS is very slow, but I am loooking for RS2 if any possibility that applying to openstack? hadoop batch? multi-cralwing…

thanks for your sharing, it helps me a lot.